AWARD-WINNING DIGITAL MARKETING

Canada website design & SEO experts.

Game-changing digital marketing services that give your business an edge over the competition.

Digital marketing starts here.

Learn why businesses trust Elite Digital Marketing with their digital assets.

50+ digital experts

At Elite Digital Marketing, we offer a comprehensive list of digital services, including website design, search engine optimization, and social media marketing. If you’re seeking a full-service digital agency that has amassed decades of experience and has an eye for out-of-the-box thinking, then you’ve come to the right place.

10-year track record

Since our inception as a digital marketing agency back in 2016, our team has always aimed to pioneer the use of the latest design techniques and software technology.

100s of clients

Our agency has had the incredible opportunity to work with huge brands across the world, including Royal Pizza, ATCO, NAIT and Snap Fitness. Regardless of your business's industry, our team has the know-how to develop a winning strategy for your brand and take your digital presence to the next level.

Proprietary software

Our team of digital experts includes software engineers who have built a range of custom software solutions for our agency, including Elite Chat.

Regardless of your business's needs, you can relax knowing that our team can build just about any custom solution you can dream of.

Where do you need help?

The majority of digital marketing issues are commonplace among businesses. Whether you're not receiving enough traffic or want to rebrand completely, we've got you covered.

Have you spent a huge budget on your website only to receive no traffic whatsoever? It’s not uncommon, but fixing it will likely require redistributing your marketing budget.

Elevate your online success with our specialized website design services. Our team of skilled designers will not only enhance the visual appeal but also optimize the structure, content, and calls-to-action (CTAs) of your site.

Are you receiving traffic on your website but nothing is converting? It may be time to rethink the optics of your marketing campaign as well as your website to ensure everything is optimized accordingly.

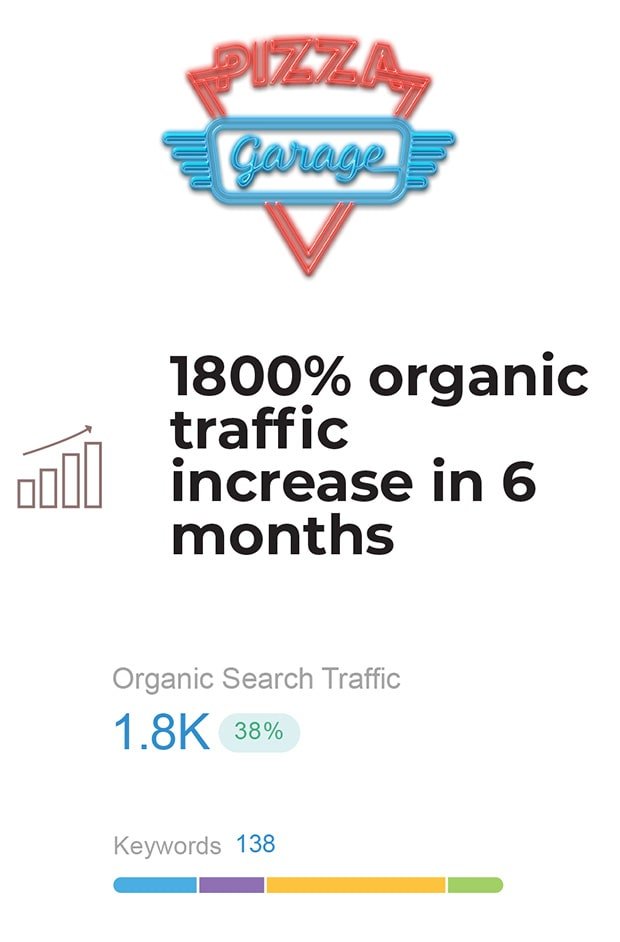

Without consistent site traffic, you’re missing out on valuable visitors, leads, and revenue. Thankfully, custom SEO solutions can get you back on track by boosting your presence in search engine results, so more people can find and visit your site.

Are you in need of a complete overhaul of your business's branding? Perhaps you’re even starting a completely new business from scratch. Regardless of your needs, the team at EDM has worked with countless businesses and brought them to new heights.

From golf courses to fitness centers to hockey teams, there are few industries our team of digital experts hasn't helped to elevate their digital strategy.

Our work speaks for itself

Our best-in-class digital marketing agency impresses customers with impactful results and wows them with stellar customer service.

Super Scoots

With our dedicated efforts, Super Scoots' website has undergone significant improvements, resolving critical issues in ecommerce functionality that hindered seamless transactions.

Town of Calmar

Our ongoing website design support helps the Town of Calmar maintain an up-to-date digital platform, enhancing community engagement and providing residents and visitors with accurate and...

CP Rail

The CP Rail Web Store simplifies the merchandise ordering process for CP Rail employees, offering a user-friendly platform for easy access to branded apparel and promotional...

Oil Kings Alumni

The Oil Kings Alumni website serves as a vibrant digital hub, uniting former players of the Edmonton Oil Kings hockey team and celebrating the team's storied...

CO2Blast

CO2Blast's revamped website is an informative and engaging digital resource for clients in Western Canada, providing a clear understanding of the benefits of dry ice blasting.

Our digital solutions

Learn why businesses trust Elite Digital Marketing with their digital assets.

At Elite Digital Marketing, we excel in the digital realm, offering a comprehensive range of solutions to enhance your online presence.

From stunning website design to targeted SEO strategies, captivating graphics, and high-quality videos, we cover it all. We also have extensive expertise in developing personalized software solutions that align precisely with the ideas you have envisioned. If a concept has lingered in your mind for a while, our team can turn it into reality.

Trust us to elevate your business in the digital landscape with our unmatched skills and creative prowess.
